Quantum SEO Content Strategies

Quantum? But why quantum? Because these strategies might work or might not work. It all depends on how you measure them, get it? Anyway, do you know what the biggest cliché in the SEO game is? I think it’s pretty clear who the winner is, because if you haven’t heard the phrase “Content is King” in the last few years, you must have been living under a rock. Or maybe you are new to SEO? Either way, you are very lucky.

This short e-book you are looking at is an exhaustive, yet easy-to-read and digest guide to everything you need to know about the SEO side of your venture. It will help you make the best decisions regarding content and, believe me, this knowledge is essential. “Content is King” is not a cliché for no reason.

But what I’m about to teach you isn’t just the basics. Oh no! There are also some spicy things waiting for you. I promise you that even if you have been doing SEO for the last 10 years you will still learn something new and, by the end, will have blown your own mind.

So are you ready for an adventure? Let’s start with the beginning…

The History Lesson

Have you ever seen one of those “celebrities before they were famous” pics on the web? In our case the celebrity is called Google, and the picture sets up the atmosphere for a 15-year journey back in time pretty well.

Back in the day, no one would have tried to convince you that content is the most important aspect of your marketing strategy. Simply because no one would have believed it to be true.

You see, even the first black-hat methods were related to content – link building was not yet a thing. Have you received advice on keyword density? We will touch on it later, but for now you should just keep in mind that the Google Panda update can totally demolish your rankings if you go overboard with the keyword stuffing – 5% and above is very risky, and some would say that even 2% can be too much.

Not in 2003 though. Oh, no. Winnie the Pooh would tell you – “The more, the more” – and that mindset could have done wonders for you at that point in time.

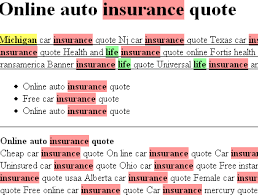

So where do you think people would put the limit on keyword stuffing? Repeat the keyword until the article is barely readable? Nope. They’d go even further. Let’s look at the image below…

Five points to whoever guesses which keyword these webmasters were trying to rank for.

And no, it’s not “poker poker poker poker poker poker poker poker poker poker poker…”

In all seriousness though, “poker” is one of the most profitable keywords on the web and this website used to top Google searches some years back.

You might be thinking “doesn’t this look disturbing to the visitors?”

The answer is that it would, if they could see it. But the text is actually white – the same color as the background. The only way to see it was to accidentally select it as in the pic above, or view the HTML code. Holy blackhat, Batman!

People who have read Moz blogs a little bit too much might not believe that Google would fall for that – but they totally used to. And it actually took a while until they made this strategy unviable – mind you, this was years before the first Panda update.

Obviously, only a select few knew how to game the system back then. It looks too simple and you might be thinking “oh, they had it easy, but now it’s too late for that and it’s not nearly as easy”. I beg to differ…

The moral of this story is – don’t give Google too much respect – they are constantly improving, but if you are ahead of the game, you will notice that gaming Google is totally possible in 2015.

This doesn’t mean that playing by the rules is not a viable strategy. It is, but you don’t really need us for that. What we are telling you is that it is not the only strategy.

And if you feel whitehat inside, that is perfectly fine, the next chapters will cover mostly whitehat strategies anyway…

You hear the bell ringing? History class is over. Hopefully you’ve had your fun, because it’s time to return to the present and get to work. Next we are going to the law class to see which ideas might get you penalized before we dive into the real nitty-gritty stuff of SEO content and most importantly, our secret hacks that almost no one knows about. It only gets better so stay tuned guys…

The Google Law Lesson

Let’s face it – you care about making the most out of your sites while complying with Google’s rules as little as possible. And every now and then, abusing some knowledge (hot tips are coming in a few pages) is cool. Doing the exact opposite of what Google wants you to do can be the right choice from time to time.

However there are some laws that should not be broken. This chapter is about all the things Google will surely punish you for. These are the things you shouldn’t even consider doing.

Keyword Stuffing

In the previous chapter I showed you an exaggerated version of the keyword stuffing tactic. However, the term “keyword stuffing” doesn’t describe only the extreme insolence, or marketing genius if you will, of those webmasters.

Finding keyword-stuffed sites through Google itself was impossible, so we had to use Seobook and web archives. That says enough.

Keyword stuffing can be any level of overabundance of target keywords in your articles. As a general rule of thumb, if you don’t use at least two synonyms of your main keyword, Google will get suspicious.

Besides, targeting long-tail keywords is a better idea anyway. Don’t be afraid if a certain phrasing does not appear to have any searches in Google Keyword Planner – often this information isn’t accurate, and you’re almost certainly missing tons of other long-tail keywords made up of the variety of words you have provided.

I would recommend that you do not overthink the keyword density problem – as long as your articles sound natural you’ll be okay.

If you want to check the keyword density anyway, a good tool to do so is the SeoQuake plugin. It is aimed at websites and their HTML pages, however what a lot of people don’t realize is that you can open .txt files with your web browser – and use this browser plugin efficiently.
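If you would rather check a plain-text draft without a browser plugin, keyword density is easy to estimate yourself. Below is a minimal sketch, assuming one common definition of density (words belonging to the keyword phrase divided by all words, as a percentage). The function name `keyword_density` is ours, and SeoQuake may count things differently.

```python
import re

def keyword_density(text: str, keyword: str) -> float:
    """Share of the words in `text` that belong to `keyword`, as a percentage."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    key_words = re.findall(r"[a-z0-9']+", keyword.lower())
    if not words or not key_words:
        return 0.0
    n = len(key_words)
    # Count every position where the whole keyword phrase starts.
    hits = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == key_words)
    return 100.0 * hits * n / len(words)

# Three of the four words are the keyword.
print(keyword_density("poker poker poker tips", "poker"))
```

Run it on the text of a draft and compare the result with the 2% and 5% figures mentioned earlier. Treat the number as a rough sanity check, not a target.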

Duplicate Content

This one is self-explanatory. It actually feels absurd that once upon a time it was possible to create a successful website just by pressing CTRL + C and CTRL + V. What’s more shocking is that this worked until somewhat recently.

Not anymore though. The Google Panda update is specifically designed to punish websites with poor content quality, and it has reformed things for sure.

Even as recently as 2013, Panda was run only once every 3-4 months – enough time to get a decent return on investment. Your investment would be $15 for domain + hosting and a day of clicking copy and paste, and people would make hundreds if not thousands of dollars doing that.

As of today, Panda is part of the main Google algorithm and posting duplicate content buys you a first-class ticket to the out-of-the-SERPs train. Trust me, you don’t want to ride this one.

As long as you don’t copy other people’s articles, however, you should be fine. Occasionally some sentences will be the same – heck, people often quote writers and scientists in their articles – and the result is obviously some duplicate content. But Google knows this and there is no need to worry – Panda can easily tell a stolen article from an original one.

At one point in 2014, Panda was overdoing it – it punished lyrics sites so badly that searching, for example, for “The Beatles lyrics” would return articles about The Beatles that contained the word “lyrics” – but no actual lyrics. Obviously the reason for that was that all lyrics sites had the exact same content and got penalized.

It was a mess-up, but Google acknowledged their mistake and fixed this promptly – so you shouldn’t expect them to make the same mistake twice.

Spun Content on Your Money Site

Ugh, God, no. Why would you even think of that?

Above you can see an example of a PR5 website homepage. I wonder what that was used for (tip: there are links further down the article).

Some international SEO adventurers claim that spun articles are not recognized as poor quality by Google in certain languages.

Not the case with English, though. And unfortunately the big money to be made on the internet is mostly in the US, so most of us are stuck with that. (Pro-tip: some exceptions would be the Brazilian and French markets, so using Portuguese and French can work for you in the right niches).

What’s certainly true, however, is that you can’t expect a website to be a huge success and use spun content on it. As a matter of fact, most spun articles that link builders use are not ideal for tier 1 backlinks either.

Lack of Multimedia

Google loves pics, videos, and even audio, but that doesn’t tell the whole story.

The reason search engines prefer pages that are rich in multimedia content is because people love it. And if you monetize your website you need to make sure your visitors love staying on it.

Google have their ways of checking how much time visitors spend on your website. The Bing team and others have explicitly said that if a user clicks the back button shortly after visiting your site, they too get a bad impression of your site. And would you blame them?

Engaging your visitors helps with both SEO and monetization, and it also brings visitors back to your website. There is no reason why you shouldn’t use multimedia to achieve this.

Thin Affiliate Sites

Ok, I was wondering until the very end whether to put this in.

The thing is, 5-page sites can work if they have enough content in the pages themselves and strong backlinks (typically blackhat) and you’ve found the golden niche where there’s money to be made and no competition to be found.

However, these don’t last for long. Oftentimes it’s long enough to make the investment worthwhile, but I wouldn’t recommend this to a newcomer, as it fails more often than not. You need the experience, financing and stubbornness of a seasoned blackhat SEO to keep going without getting discouraged or running out of money for new sites (the general idea of this tactic is to have 1 site make up for the losses of tens of other sites).

If you are using Adsense to monetize your traffic, having multiple small websites is not something Google tolerates either. You’ll most likely get your money stolen by them (yes, at one point they will stop paying, even if you send the clicks) and your account shut down. Adding a new article to the sites at least every two weeks (ideally once a day) can save you from that.

And even if you’re not using Adsense, you’re probably trying to monetize your traffic with affiliate offers, and you’re out of luck there, too. Affiliates are Google’s competition, so the search giant can certainly resort to playing dirty.

“Thin affiliate websites” is a vague term, yet often used to rationalize penalties on webmasters by the web-search monopolist. If you don’t deliver fresh content to your website, you’re still in trouble – there’s no Adsense account for Google to shut down, but there is a website to punish – so you can kiss your profits goodbye.

Feeling depressed? Don’t worry, it’s my fault. I decided to put all the bad news into a single article. I promise you, it only gets better from this moment on – the law and punishment portion of this document is over.

SEO Content Basics

This chapter will serve as a crash course for all you folks who are just starting out.

While experience is the greatest teacher, when it comes to basics, a little bit of reading goes a long way. Instead of reinventing the wheel, here you can have all the information you need at your disposal.

By the end of this chapter you will have the right mindset before planning and releasing content for your website – thus avoiding any silly mistakes you would have made otherwise.

Getting Familiar with Google

Understanding how search engines work is the best place to start your learning journey. Since you are on your way to becoming a webmaster, you should think of Google as a long-term business partner of yours.

So, obviously, it makes perfect sense to know from the start what Google wants and needs from you – this way you can have a productive relationship.

What’s also cool is that what we are about to describe also applies to Bing and Yahoo!

So what do search engines do exactly?

The main goal of search engines is to realize exactly what their visitors are looking for, and give them the most relevant suggestions back.

Simultaneously, they have their so-called “spiders” that crawl all over the web, go through all accessible pages and try to understand what these pages are all about so that they can know if they are worth recommending for specific searches.

Sounds simple enough right? However, let’s focus on some of the keywords and examine why they are of importance.

Accessible Pages

The first part of the algorithm deals with finding the pages. Spiders work in the following manner: They start from some web page. Then they go through all of its content while simultaneously looking for links to other pages and placing them in a queue so that they can be examined later.

After the spider is done with the page, it picks one of the linked pages from the queue and goes through the same process, further filling the queue. With enough computing power you can browse a significant portion of the World Wide Web using this simple algorithm.
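The queue-based process described above can be sketched in a few lines. This is a toy illustration, not how Google’s crawler is actually built: the site is a hypothetical in-memory dictionary instead of real web pages, and the `seen` set (so that no page is queued twice) is our addition.

```python
from collections import deque

# Hypothetical site: each page maps to the pages it links to.
LINKS = {
    "/": ["/blog", "/about"],
    "/blog": ["/blog/post-1", "/"],
    "/about": [],
    "/blog/post-1": ["/blog"],
    "/orphan": ["/"],  # nothing links here, so the spider never finds it
}

def crawl(start: str, links: dict[str, list[str]]) -> list[str]:
    """Return pages in the order a simple queue-based spider would visit them."""
    queue = deque([start])
    seen = {start}
    visited = []
    while queue:
        page = queue.popleft()
        visited.append(page)            # "go through" the page
        for target in links.get(page, []):
            if target not in seen:      # skip pages already queued or visited
                seen.add(target)
                queue.append(target)    # examine it later
    return visited

print(crawl("/", LINKS))
```

Notice that `/orphan` never shows up in the result: the spider can only reach pages that some already-visited page links to.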

You’ll see why understanding exactly how these spiders work is important for you in the section about accessibility and website structure below.

Relevant Pages

The second step is about interpreting the individual pages. This is where your content matters the most.

In order for the Google algorithm to find a page on your website suitable to return for a specific query, it will need a reason to believe that your page is relevant.

There are multiple algorithms in place that judge your pages based on specific criteria. Most of the information from this moment on will be based exactly on how to convince Google that your page is not only relevant, but also the most appropriate result.

Knowing some of the algorithms can give you a head start, so read the following chapters carefully.

Now that you know what your two major concerns are, let’s see how to make the best out of this knowledge.

Issues with Accessibility

First of all, the pages must be accessible. By default they probably are. But people make many mistakes along the way that prevent Google spiders from reaching all web pages. Let’s see how to make sure this doesn’t happen to you.

Poor Website Structure

To brighten things up, let’s enjoy the picture below…

Thank you, thebiguglywebsite.com, for showing what type of garbage can rank #1 (although for “ugly website”).

While this is not the poor structure we’re going to talk about, it is just a beautiful thing to see, so enjoy it fully until we return to seriousnessland.

Since Google’s goal is to figure out which sites people find helpful, it really makes sense that they are trying to make their spiders think like humans.

So let me ask you this. If a human needs 10 clicks on your website to reach a page, what are the chances that he/she can find it?

It is really easy for web spiders to crawl every page on a website. However if Google decides that a page is hard to reach by humans, they will make it unfindable on search engine result pages (SERPs) too.

Let me give you yet another example – when you enter a library or a bookstore books are grouped by genre. Fantasy books are on one shelf, non-fiction books are standing on another…

It would be nearly impossible to find what you’re looking for if all the books are sorted randomly instead. And nobody would visit this library.

Websites shouldn’t be different – if yours looks like a mess, you can’t expect good rankings on any search engine.

If you overdo the “grouping by subcategory” you might hurt your rankings, too. Because as mentioned above, if a page is 10 clicks away from your homepage then it certainly looks as low-priority as humanly possible.

Ideally, no page should be more than 3 – 4 clicks away from the homepage. Important pages (those that target your main keywords) should be 1 click away. End of story.
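If you have a list of which page links to which, you can measure click depth yourself. Here is a minimal sketch, assuming a hypothetical dictionary of internal links. The function names and the default limit of 4 clicks are ours, taken from the rule of thumb above.

```python
from collections import deque

def click_depths(home: str, links: dict[str, list[str]]) -> dict[str, int]:
    """Fewest clicks needed to reach each page, starting from the homepage."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:    # first time we reach it is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths

def too_deep(depths: dict[str, int], max_clicks: int = 4) -> list[str]:
    """Pages that sit more than `max_clicks` clicks away from the homepage."""
    return sorted(page for page, depth in depths.items() if depth > max_clicks)

# Hypothetical site where every page only links to the next one.
CHAIN = {"/": ["/a"], "/a": ["/b"], "/b": ["/c"], "/c": ["/d"], "/d": ["/e"], "/e": []}
print(too_deep(click_depths("/", CHAIN)))
```

Any page the function reports is a candidate for a link from the homepage or from a category page.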

Modern Interactive Custom-Made Websites

If your plan is to use WordPress, Joomla or some other popular CMS, this doesn’t apply to you so feel free to skip the next few lines.

However, if you’re having a website developed from scratch you can run into a few issues.

The modern web includes tons of interactive content, chat-popups, etc. And unfortunately, Google sucks at dealing with them. They are all based on JavaScript, and even in 2015 search engines struggle to comprehend what’s hiding behind the JavaScript, what the content there is related to and what user problems it can solve.

The solution is simple – talk to your web developers, explain that you want your content to be indexable and well-received by search engines. And if you insist on adding some interactivity here and there, make sure that no essential content is hidden behind it.

Ghost Pages

With the arrival of massively popular CMS (content management system) platforms like WordPress and Joomla, this hasn’t been a real concern for quite some time. Still, in case you’re writing the HTML yourself, you will need to consider whether your page can be reached, a.k.a. whether there are any links pointing towards it.

A link from a category page or another page on your website will do. WordPress will handle this automatically so I highly recommend using a CMS for any purpose other than learning HTML.

Robots.txt and .htaccess Files

This is too advanced for now, and not all that content-related either, but since we are on the topic of accessibility a sentence or two won’t hurt.

There are ways to configure your webserver to prevent robots from crawling it. This won’t happen by default but we have seen webmasters blocking Google for whatever reason. I can’t think of any reason other than a site administrator playing a dirty trick, honestly, but you need to be careful.
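A quick way to make sure nobody has locked Google out is to test your robots.txt rules. The sketch below uses Python’s standard `urllib.robotparser` module. The two robots.txt texts and the example.com URL are hypothetical, and the helper name `is_crawlable` is ours. It covers robots.txt only, not .htaccess rules.

```python
from urllib.robotparser import RobotFileParser

def is_crawlable(robots_txt: str, url: str, agent: str = "Googlebot") -> bool:
    """Check whether the given robots.txt text lets `agent` fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)

# Hypothetical robots.txt that blocks Google from the whole site.
BLOCKING = """\
User-agent: Googlebot
Disallow: /
"""

# Hypothetical robots.txt that blocks nothing.
OPEN = """\
User-agent: *
Disallow:
"""

print(is_crawlable(BLOCKING, "https://example.com/page"))
print(is_crawlable(OPEN, "https://example.com/page"))
```

If the check comes back negative for a page you want ranked, have a word with whoever edited the file last.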

Article Structure

As previously mentioned, in order to decide to return a page as a relevant result for a query, search engines must understand what the page is about. So why not help them understand it better?

Previously we looked at website arrangement. Now let’s go deeper and touch on article design.

Headers

One of the most important hints that you can give Google about the topic of an article is the header. In HTML, headers are represented by the following tags: <h1>, <h2>, <h3> all the way up to <h6>.

Although you can put these tags anywhere you want, h1 should hold the main header, h2-s should go below it and so on. Anything other than that screams poor content structure and makes Google lose trust in your ability to arrange a webpage.

Obviously, h1 tags are the most important, and your main keyword should absolutely be inserted there.

H2-s matter, too. Make sure, however, that you use them in a way that is the best for your readers. This means that if it doesn’t make sense to put one of your secondary keywords in an h2 – then so be it. Obviously, if it is suitable, that’s a plus.

H3 and below are certainly considered, too, but are not nearly as important. In fact I can’t see too many occasions where using these tags all the way up to h6 is a good idea.
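Checking the header order by hand gets boring fast, so here is a minimal sketch that does it with Python’s built-in `html.parser`. The rules it enforces (exactly one h1, h1 comes first, no skipped levels) are our reading of the advice above, not an official Google checklist, and the names are ours.

```python
from html.parser import HTMLParser

class HeadingCollector(HTMLParser):
    """Collect heading levels (1 for <h1>, 2 for <h2>, ...) in document order."""

    def __init__(self) -> None:
        super().__init__()
        self.levels: list[int] = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))

def heading_problems(html: str) -> list[str]:
    """Return human-readable complaints about the heading structure."""
    collector = HeadingCollector()
    collector.feed(html)
    levels = collector.levels
    problems = []
    if levels.count(1) != 1:
        problems.append(f"expected exactly one h1, found {levels.count(1)}")
    if levels and levels[0] != 1:
        problems.append("first heading is not an h1")
    for prev, cur in zip(levels, levels[1:]):
        if cur > prev + 1:  # e.g. an h2 followed directly by an h4
            problems.append(f"h{prev} is followed by h{cur}, skipping a level")
    return problems

print(heading_problems("<h2>Intro</h2><h4>Details</h4>"))
```

An empty list means the page follows the h1, then h2, then h3 order described above.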

Bold, Italics, etc

People hate reading big walls of monotonous text. One way to make your text content look more appealing and fun to read is by using bolded text or italics, or any other text modifier for that matter.

Placing important keywords in bold has always been considered helpful for your SEO efforts. While the effect is minor and hard to measure, it does make perfect sense.

However if it doesn’t make sense to put specific keywords in bold, then don’t. You’ll find that optimizing your text for human readers rather than Google spiders is more rewarding in the long run. Focus on making your content easier to consume by a person and you’re fine.

Bulleted and Numbered lists

Until recently, bulleted lists were confusing to Google. Not anymore. They make content pleasant to read – it only makes sense that search engines reward you for using them.

Besides, one of people’s favorite article types to read is the “Top 10 X” and “10 ways to X”. These are the perfect link-baits, and they will get you shares on social media, and your high rankings will soon follow.

And oftentimes a short bullet list contains all of the major keywords that an article is trying to rank for – think of the Contents pane at the top.

Optimizing Images

This one is up to you and not the article writers so read carefully.

What you should do is the following:

- Use the alt attribute (the so-called “alt tag”) and put some relevant text in it. In the best case scenario your alt text would describe the image well, contain an important keyword that you want to rank for and be no more than 10-12 words long. Six and below is best.

- Don’t name your images 24098274_45383.jpg. Use the same principles as with the alt text.

- Size and place your images appropriately. People hate big walls of text, but having an enormous image somewhere in the middle of it can only make it look more obnoxious. You’ll get fewer links and worse rankings in return.

- Make sure your images are not enormous. Sometimes saving a JPEG as a 200 KB file or as a 1 MB file makes no noticeable difference in image quality. However, the latter can suck up a lot of traffic and, more importantly, make the page load more slowly. This not only discourages readers, but gets you a small penalty from Google as well – you should always strive to make your websites load as quickly as possible.

Optimizing alt tags not only helps with rankings, but also shows blind visitors to your websites that you appreciate them and have not forgotten about them. Even without the Google-ranking-based motivation you should probably be a good person and add those anyway. Remember – showing respect for your visitors goes a long way.
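The alt-text and file-name points from the checklist above are easy to check automatically. Here is a minimal sketch using Python’s built-in `html.parser`. The 12-word limit comes from the checklist, the “only digits, underscores and dashes” test for lazy file names is our own guess at what counts as non-descriptive, and file size is not checked at all.

```python
import re
from html.parser import HTMLParser
from posixpath import basename

class ImageAudit(HTMLParser):
    """Collect warnings about <img> tags: missing or long alt text, numeric file names."""

    def __init__(self, max_alt_words: int = 12) -> None:
        super().__init__()
        self.max_alt_words = max_alt_words
        self.warnings: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attr = dict(attrs)
        src = attr.get("src") or ""
        alt = (attr.get("alt") or "").strip()
        if not alt:
            self.warnings.append(f"{src}: missing alt text")
        elif len(alt.split()) > self.max_alt_words:
            self.warnings.append(f"{src}: alt text longer than {self.max_alt_words} words")
        # File name without directory and extension, e.g. "24098274_45383".
        name = basename(src).rsplit(".", 1)[0]
        if re.fullmatch(r"[\d_\-]+", name):
            self.warnings.append(f"{src}: file name is not descriptive")

def audit_images(html: str) -> list[str]:
    audit = ImageAudit()
    audit.feed(html)
    return audit.warnings

print(audit_images('<img src="/img/24098274_45383.jpg">'))
```

Feed it the HTML of a page and fix whatever it complains about.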

And when it comes to SEO for images – it works in two ways.

First, it enhances the SEO of the current page.

Second, it can get the image itself ranked. Don’t underestimate image traffic, it brings more visitors than most people assume.

Never Overuse Small Articles

Sites used to rank with as little as 200 words of content per page. Simply put, this is really unlikely to happen nowadays, unless your site is the only one in the niche.

Instead, focus on at least 400-500 words of content for most articles.

For the few really big keywords that you would target, I’d highly recommend going for 5000 or even more.

A recent study on content length, published some time after the Penguin/Panda updates, showed that most SERPs are made up of pages with 2000+ words, and the more words on the page the better. While it was probably fake, 2000+ does seem to hit a sweet spot for big keywords.

SEO Content Hacks

While the effect of a single detail can often be mistakenly recognized as rather small, striving for excellence pays off sooner rather than later.

In this chapter we will discuss multiple small details that make the difference and help your website become a favorite to readers and search engines alike.

Here are some tips/hacks in no specific order:

Use Keyword-Dense Phrases

Seems obvious, but let’s elaborate a little bit on that, because many seem to do this wrong.

We mentioned before that keyword stuffing is lethal and it shouldn’t be done. With the Hummingbird updates, the introduction of LSI and many other new aspects of the way Google interprets your content, this is not the only reason not to go for that.

In fact a better reason would be that the more synonyms or alternative wordings for a certain phrase you have, the better.

Post-Hummingbird you can rank for similar keywords without even having them in your article. This might lead you to the wrong conclusion that using synonyms is not that important anymore, but it’s the exact opposite.

You never know which words Google interprets as similar.

If there are multiple keywords that are related to one another in the same article, for Google this means that this article is of a good quality and hand-written.

At the same time the more similar words you have, the more starting points you’re providing for Google to start looking for newer synonyms (that you might not have thought of) – so the total number of keywords you’re targeting is growing at a rate that is not even linear – it’s exponential.

Exploit the Freshness Factor

Not too long ago, Microsoft were laughed at because of a Bing-related failure.

The same day they released the new version of their own gaming console – the Xbox – searching for it on Bing images would only return pictures of their older Xbox version.

Not in Google though. Even though they are competitors and not the company to release the product, Google images would not show an obsolete image on the first 10 or so pages.

While this is certainly a big win for Google and a catastrophic failure for Microsoft, if you think very carefully you might notice a little problem with that.

The problem is that Google are overzealously promoting newer content instead of somewhat older articles. What does that mean for you?

It means that if you’re looking to just now enter a niche you have a big head start over your competitors. Older domains bring authority, but fresh ones top that – the only thing you need to do to take full advantage is to start with at least 50 brand new articles in the same niches as your competitors.

Then publish at least 2 a week on the same weekdays. Seven might be better. The “same weekdays” part is very important if you want old visitors to come back to the website – when people know that you’ll give them new content on Wednesdays and Sundays for example, they will not need to use Google to find your website – they will just write the URL down. You’ll be shocked at the efficiency of this simple idea.

My advice is to have at least two weeks’ worth of articles prepared in advance – and use the scheduling functionality of your CMS in order to set the exact time at which they will be published. This way you won’t miss an article due to unforeseen circumstances, and it also means less time working on this website, and more free time for other productive activities.
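To fill in the scheduler you first need the dates. Here is a small sketch that lists the publishing days for the next two weeks. The function name is ours and the Wednesday/Sunday choice simply mirrors the example above. How you enter the dates into your CMS depends on the CMS.

```python
from datetime import date, timedelta

def publishing_dates(start: date, weekdays: set[int], weeks: int = 2) -> list[date]:
    """Dates on the chosen weekdays (Monday=0 ... Sunday=6) within `weeks` weeks of `start`."""
    days = (start + timedelta(days=offset) for offset in range(7 * weeks))
    return [day for day in days if day.weekday() in weekdays]

# Wednesdays (2) and Sundays (6), starting from Monday, 1 June 2015.
for day in publishing_dates(date(2015, 6, 1), {2, 6}):
    print(day.isoformat())
```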

This strategy goes very well with another one – which would be …

Steal your Competitor’s Keywords and Rankings

And there are plenty of free ways to do this. Just browsing through their articles and stealing the H1-s would be a good enough approach. But there are ways to build upon that.

The Google Keyword Planner was never designed to help SEO-s but it does a pretty good job at it. If you didn’t know, it’s willing to give you additional keywords that it believes are relevant. There are good reasons to believe that those come from their LSI-algorithm – some good info on that is coming later.

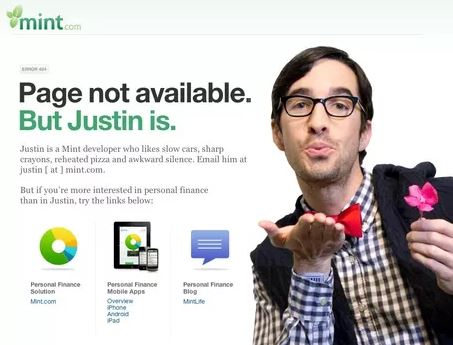

404 Pages

Ideally you should never have outbound links that lead nowhere (HTTP 404 error). But what’s even worse is when backlinks pointing to your website lead the user to a 404-error page.

Google takes the former as a signal that your website is obsolete, and for a good reason. Your rankings will suffer because of it. Luckily though, it is really simple to avoid if you just regularly run the Xenu crawling tool on your website. Xenu is a free piece of software that acts just like a Google crawler, except it stays on your website. It can easily find both expired links pointing to other websites and (God forbid) links pointing towards now-missing pages on your own site.

This is website maintenance 101 and it should be part of your basic SEO hygiene.
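If you prefer a script to a desktop tool, the core of such a check fits in a few lines. This is a rough sketch using only Python’s standard library, not a replacement for a full crawler: it checks a list of URLs you give it, the function names are ours, and some servers refuse HEAD requests, so treat the result as a starting point.

```python
import urllib.error
import urllib.request
from typing import Callable, Iterable

def http_status(url: str, timeout: float = 10.0) -> int:
    """Return the HTTP status code for `url`, or 0 if the server cannot be reached."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        return error.code  # 404, 410, 500, ...
    except urllib.error.URLError:
        return 0           # DNS failure, refused connection, etc.

def broken_links(urls: Iterable[str],
                 get_status: Callable[[str], int] = http_status) -> list[str]:
    """Return the URLs that answer with a 404."""
    return [url for url in urls if get_status(url) == 404]
```

Call `broken_links` with the outbound and internal links collected from your pages. The `get_status` parameter exists so that you can swap in a fake lookup when trying the function out without touching the network.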

The actual trickery however comes with the 404 errors on your own website. If, for some reason, there are links on other people’s websites, pointing towards pages on yours that no longer exist, you’re in trouble.

Luckily Google bots only identify actual 404 HTTP errors. While we’ll not dive into the HTTP protocol and programming here, we want you to know that it is possible to lead the user to a beautifully crafted page that says “404 error” without actually having the server respond with a 404 error on the HTTP level – that’s right, the server returns 200 (as in “everything is ok”) yet your visitor knows that the page is no longer there. Your IT guys will surely know how to accomplish that, and even simpler – there are CMS plugins for that.

Depending on the CMS you’ve chosen you can easily find one in their respective add-on stores just by searching for “404”.

Examples of awesome 404 pages can be seen below…

And the blackhat part of this tip would be – instead of showing a pretty 404 message, automatically redirect your visitors to the pages that you’d expect make you profit most.

It’s unlikely that your visitors would be flattered by that, but it doesn’t look nearly as ugly as a default 404 page, and it can bring more leads, sales… it’s a win-win regardless.

Encourage Social Sharing

Let’s not confuse on-site and off-site SEO with content creation/management and backlinking respectively. There is a clear intersection and this is a big portion of it.

Social shares are not only important, they are a big investment for the future when they will be even more important. Not to mention the non-Google traffic they can give you.

So what can you do to your content to get more social shares? Well, this depends on how far you want to push it. Let’s list the main strategies:

Politely Asking for Shares

Just add a sentence at the bottom of your page saying “If you liked this article please use the social buttons below” or something like that.

This is better than nothing, but it is not ideal. In case you really want to be unobtrusive to your visitors it is probably the best strategy – nobody would be annoyed by your kind request.

One thing you can do to enhance the efficiency of this strategy would be to add another one or two sentences describing how much social sharing means to you and how it is the thing that makes you continue producing quality content.

When you actually do this instead of placing a generic sentence at the bottom you can expect more than double the shares you’d normally get. Still there’s many ways to improve upon this.

“Please share” Pop-Ups

Sharing popups – yes these are disgusting and I agree. Having a “Like us on Facebook” window pop up unexpectedly makes your visitors feel like they are being punched. And even if you get the likes and followers, there is much to lose, too. But…

This simply means that most people are doing it wrong, so you don’t have a good example. Let’s freshen up this idea – because it is legitimately possible to reach the effectiveness of the pop-up approach without frustrating the people kind enough to visit your website anyway.

Have your developers (or use some code from the web) create something like an animated character in a place on your page where there’s no content to hide. Draw a comic-like speech balloon with something that draws attention (a simple “Psst” would suffice). Only after the user clicks on it would a request for a Facebook/Twitter follow or like appear – and in the perfect scenario it would be worded in a mannerly fashion.

Obviously what we just described is one example – you can get creative (and you probably should). The main difference with this approach is that the social share request does not catch the visitor off-guard and does not cause the tiniest bit of stress. The user knows that he/she caused the pop-up to appear so they won’t think negatively of your website.

It’s a small subconscious thing, but it makes a huge difference and should be kept in mind.

Or, if you really want maximum shares, there’s an even better solution. Let’s introduce the highest-risk-highest-efficiency strategy…

Content Lockers

Have you ever seen these before? If you haven’t, a content locker is a box of social sharing options that, once used, disappears and reveals a part of the content that was previously hidden.

Now there’s a golden rule you need to follow so that you get those much-needed social shares without frustrating your visitors:

Never, by any means, hide essential parts of the content there. If users can’t immediately see what they clicked through to your article for, this sends a bad signal.

Ideally what you should be doing is giving something extra in exchange for their troubles. Obviously that “extra” can vary from website to website so we will leave the creative part to you – that’s the fun part anyway, isn’t it?

Write Custom Meta Descriptions

This has always been a great idea, but for different reasons.

Years ago, metadata was a major SEO ranking factor. Obviously, it stopped being one once people started abusing it heavily.

Then for years it meant nothing for SEO, but your meta description would still show up on the SERPs once you ranked, so writing click-bait meta descriptions would get you more visitors than the default text that would otherwise appear.

Nowadays, we have good reason to believe that Google borrowed a ranking factor from Bing, one that brings meta descriptions back into the game.

Imagine for a second that you are Google. There is this SERP of yours that you ranked based on a well-thought-out algorithm.

Yet every time someone sees it, they click on the second link instead of the first one.

What would you think?

Well, only recently Google decided that this is a meaningful factor: if searchers prioritize a lower-ranked link over those above it, then it is probably a better match for the search term.

With catchier meta descriptions you can achieve that. So not only would your visitor stats skyrocket, but so would your rankings – leading once again to even bigger increases in visitors. It’s a win-win-win situation, so to speak.
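To make the reasoning concrete, here is a minimal sketch of the kind of check described above: given click counts per SERP position, it flags any result that earns a higher click-through rate than every result ranked above it. This is only an illustration of the idea, not Google’s actual algorithm, and the function name `outperforming_results` and its inputs are hypothetical.

```python
def outperforming_results(clicks_by_position, impressions):
    """Return the 1-based positions whose click-through rate (CTR)
    is higher than the CTR of every result ranked above them."""
    if impressions <= 0:
        raise ValueError("impressions must be positive")

    flagged = []
    best_ctr_above = None  # highest CTR seen among better-ranked results
    for position, clicks in enumerate(clicks_by_position, start=1):
        ctr = clicks / impressions
        if best_ctr_above is not None and ctr > best_ctr_above:
            flagged.append(position)
        if best_ctr_above is None or ctr > best_ctr_above:
            best_ctr_above = ctr
    return flagged


# Hypothetical numbers: the second result gets more clicks than the first.
print(outperforming_results([120, 310, 40], impressions=1000))
```

With these made-up numbers only position 2 is flagged, which is exactly the "everyone clicks the second link" situation from the example.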

Following all these on-site strategies would certainly contribute hugely to your success. But the fun part has just begun.

Introducing the cherry on top…

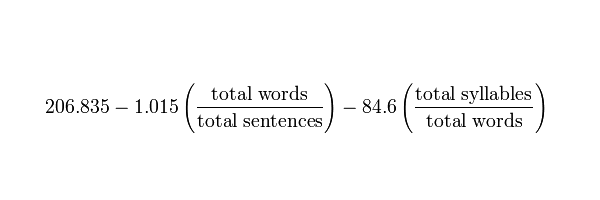

Use Contextual Links in Content with High Flesch-Kincaid Score

Flesch-Kincaid formula:

It has been a while since Penguin 2.1 struck, yet here most SEOs are, making the same mistakes over and over again.

Many have claimed that Penguin 2.1 did to GSA SER and to tiered link building what the previous Penguins did to Senuke and Ultimate Demon.

Except it didn’t. Building tiered links still works, but only for those few (and we mean really few) who know how to do it properly.

The problem is that most people who use automated software to build their tiers do whatever worked two years ago and expect it to work again. Well, it’s no wonder they get frustrated and give up afterwards. But it’s all their fault.

And the problem is not the links themselves. It is the content that they are put in. Yes, content matters for link building as well and this book isn’t just about on-page SEO.

It is about the King of off-site SEO. Which is still… yeah…

You see, the problem is that Google can tell with astonishing accuracy whether you used unreadable spun content for your tiers or not. But this is not due to a groundbreaking discovery in artificial intelligence.

It is due to a simple algorithm. A well-financed and highly professional reverse engineering study recently showed an almost 99% correlation between SERP changes during the Penguin 2.1 hit and the idea that Google decides whether content is spun based on its Flesch-Kincaid score.

Flesch-Kincaid is an algorithm that determines how easy a piece of content is to read. It does that by taking into account two metrics – the average number of syllables per word and the average number of words per sentence.
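If you want to check your own content, the two standard published formulas are easy to compute. The sketch below is an approximation under stated assumptions: the syllable counter is a rough vowel-group heuristic (real tools use pronunciation dictionaries), and the function names are my own. Keep in mind that the two variants move in opposite directions: the Flesch Reading Ease score is higher for easier text, while the Flesch-Kincaid Grade Level is higher for harder text. This book does not say which variant the study relied on, so the sketch computes both.

```python
import re


def count_syllables(word):
    """Rough heuristic (an assumption): one syllable per group of vowels."""
    lowered = word.lower()
    count = len(re.findall(r"[aeiouy]+", lowered))
    # Treat a trailing "e" as silent ("make"), but keep "-le" endings ("table").
    if lowered.endswith("e") and not lowered.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def text_stats(text):
    """Return (average words per sentence, average syllables per word)."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return len(words) / len(sentences), syllables / len(words)


def flesch_reading_ease(text):
    """Higher score = easier to read."""
    words_per_sentence, syllables_per_word = text_stats(text)
    return 206.835 - 1.015 * words_per_sentence - 84.6 * syllables_per_word


def flesch_kincaid_grade(text):
    """Higher score = harder to read (approximate US school grade)."""
    words_per_sentence, syllables_per_word = text_stats(text)
    return 0.39 * words_per_sentence + 11.8 * syllables_per_word - 15.59


sample = "The cat sat on the mat. The dog ran to the park."
print(round(flesch_reading_ease(sample), 2), round(flesch_kincaid_grade(sample), 2))
```

Running both functions over a batch of your tier content is a quick way to spot articles whose scores sit far away from the rest.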

What this means is that spun content typically gets extremely low scores when this algorithm is applied (meaning that the content is hard to read). Being hard to read is not always a good thing, but if 100% of your backlinks are placed in content that looks as if it is designed for fifty-year-olds then there’s probably something fishy going on.

Unfortunately, the spun content most people use post-Penguin 2.1 is the same garbage, meaning that Google is quick to catch on and punish the website owner.

But this doesn’t mean that using spun content wouldn’t rank you. It just means that you should get around Google’s algorithm by providing high-scoring content. Easy, right?

Obviously you can achieve high Flesch-Kincaid scores yourself if you believe you can afford the time and effort required. As previously mentioned, this would mean that you should aim to give your spun content longer sentences and rarer, longer words. While it is an extremely daunting task, you can see that it is really simple to explain.

LSI and the Future

This chapter will serve as your introduction to LSI – the algorithm that Google has already started using and aims to improve and rely on more heavily in the future.

So what exactly is LSI? Well, the acronym stands for Latent Semantic Indexing and, overall, it is a big leap ahead from using just keyword density as a means of determining content relevance.

Instead of giving you a boring definition, I’ll use an example.

An article containing “Google” in the header would obviously be about the company Google.

An article containing “Microsoft” in the header would obviously be about the company Microsoft.

An article containing “Apple” in the header… might as well be about apples.

Words have different meanings, as you all know well. But how would a simple keyword-density checker determine the exact meaning of a word? Well, it can’t.

But seeing words such as “iPhone”, “iPad”, “technology” and “new release” can point the algorithm in the right direction – and that’s what LSI is all about.
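Here is a toy sketch of that idea. To be clear about the assumptions: real Latent Semantic Indexing works by factorizing a large term-document matrix, and nothing below is Google’s code. This simplified version only counts how many known context words of each meaning appear in a text, and the word lists in `SENSE_CONTEXT` are hypothetical examples I made up for illustration.

```python
import re

# Hypothetical context vocabularies for two meanings of "apple".
SENSE_CONTEXT = {
    "Apple (company)": {"iphone", "ipad", "technology", "release", "mac"},
    "apple (fruit)": {"orchard", "fruit", "pie", "juice", "tree"},
}


def guess_sense(text, sense_context=SENSE_CONTEXT):
    """Pick the meaning whose context words overlap most with the text.

    Returns (best_sense_or_None, overlap_counts_per_sense).
    """
    tokens = set(re.findall(r"[a-z]+", text.lower()))
    scores = {sense: len(tokens & vocab) for sense, vocab in sense_context.items()}
    best = max(scores, key=scores.get)
    # With no supporting context words at all, refuse to guess.
    return (best if scores[best] > 0 else None), scores


sense, scores = guess_sense("Apple announced a new release of the iPhone and iPad")
print(sense, scores)
```

A plain keyword-density checker sees the same word "apple" in both kinds of article; the surrounding vocabulary is what tips the balance.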

But determining the exact meaning of a word from the context it’s in is only a small part of what LSI can be used for.

Googling “cool race to watch” won’t bring up an article containing the words “cool”, “race” and “watch”. The first result as of the time of writing this ebook is about F1, the second is about NASCAR – and these are the main keywords there. Yet they are nowhere to be found in my query. And the second article contains neither “cool” nor “race”. This is all thanks to LSI.

What Does All this Mean for You?

Well, first of all it means that focusing on keyword density and exact keywords does not appear all that appealing anymore. But I beg to differ.

Our continuous experiments have shown that instead, we have in our hands a new era of keyword stuffing. But instead of using the same keywords over and over, we’re using the Keyword Planner to determine which words Google thinks are the closest to those we are trying to rank for. And we’re putting them in there.

Essentially, the keyword density at the end of this would be as natural as possible, yet the effect would be similar to 2001-style keyword stuffing – top positions.
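If you want to keep an eye on this while writing, a small script can report the density of your main keyword next to the related terms you picked. This is a minimal sketch, assuming density means "occurrences per 100 words" (other tools define it differently); the function name `term_density` and the sample terms are hypothetical, and your related terms would come from your own research.

```python
import re


def term_density(text, terms):
    """Return {term: occurrences per 100 words} for single words or phrases."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if not words:
        raise ValueError("text contains no words")

    total = len(words)
    densities = {}
    for term in terms:
        term_words = term.lower().split()
        n = len(term_words)
        # Slide a window over the text and count exact phrase matches.
        hits = sum(1 for i in range(total - n + 1) if words[i:i + n] == term_words)
        densities[term] = 100.0 * hits / total
    return densities


text = "seo tips and seo tricks for better search engine rankings"
print(term_density(text, ["seo", "search engine"]))
```

The goal is a low density for each individual term while the related terms together still cover the topic thoroughly.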

We are going for rank 1! Are you coming?