Ultimate GSA SER Site Lists Building – Step By Step Tutorial

It’s very simple – if you have great site lists, you will have great results from GSA Search Engine Ranker. Now, I already covered a few techniques on how to build your own site lists in the ultimate GSA SER tutorial, but I feel like there’s much more to say about that. So let’s say it.

What You’ll Learn

- What GSA SER site lists are – many people, especially beginners with this software, do not understand what its link lists are and how they can use them, which is knowledge of vital importance.

- How you will get your seed verified target URLs list – those will be the first URLs you will build backlinks on and add to your GSA SER verified link lists.

- Growing your verified site lists – once you acquire some verified link lists from your seed target URLs, you will grow them exponentially using a couple of techniques I will share with you.

- Filtering your GSA SER site lists – if you wish to build separate site lists for different purposes, e.g. some for high quality link building and others for lower tiers, you need to pre-filter them, and this section will teach you how to do that and which filters are good to use.

- Keeping your link lists clean – you don’t want to clutter them as this will lower VpM and overall effectiveness of Search Engine Ranker.

- Wrapping it up – in the end…

What Are These GSA SER Site Lists?

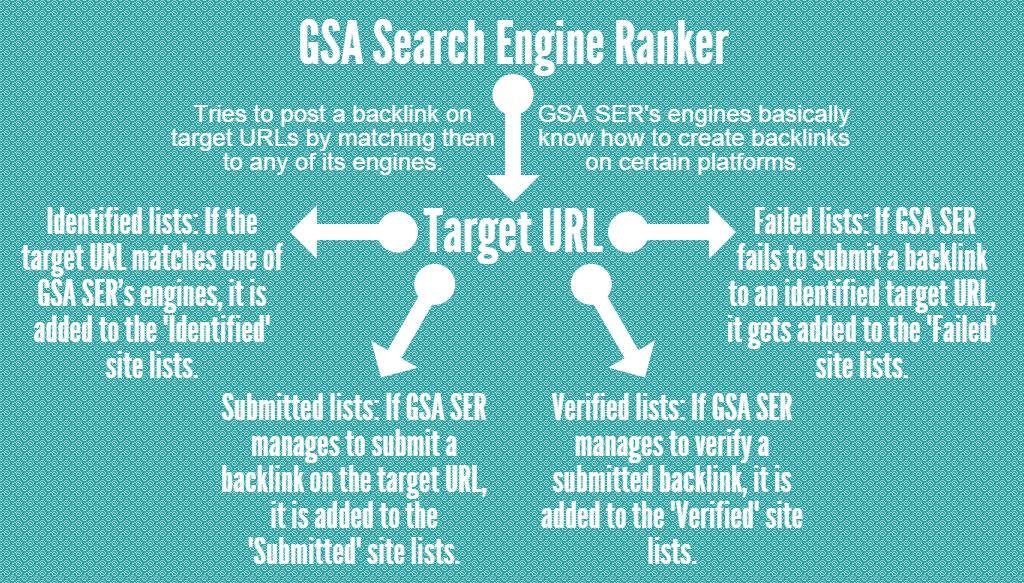

Basically, the site lists of GSA SER keep a set of URLs. Here is a graphic that portrays all four of this SEO tool’s link lists and what they are used for:

Now, as you can see, we have 4 different site lists:

- Identified – target URLs which match any of GSA SER’s engines are stored here.

- Submitted – target URLs to which the software successfully managed to submit a backlink are stored here.

- Verified – submitted backlinks which are later checked and turn out to be live and working are stored here.

- Failed – target URLs which are identified but GSA SER fails to submit a backlink to them for whatever reason are stored here.

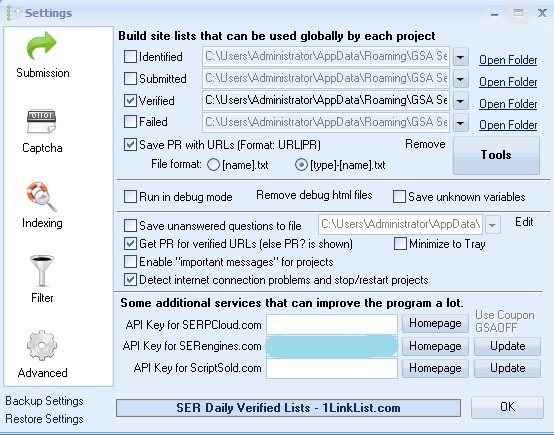

It is important to note here that if these site lists are not enabled via the “Options” -> “Advanced” menu of GSA SER, no URLs will be stored in their respective folders. So for example:

On this GSA SER instance, I have enabled only the “Verified” site lists which means that only that folder will be filled with target URLs. Generally, you’d want to save only verified URLs, because they are the ones that actually count.

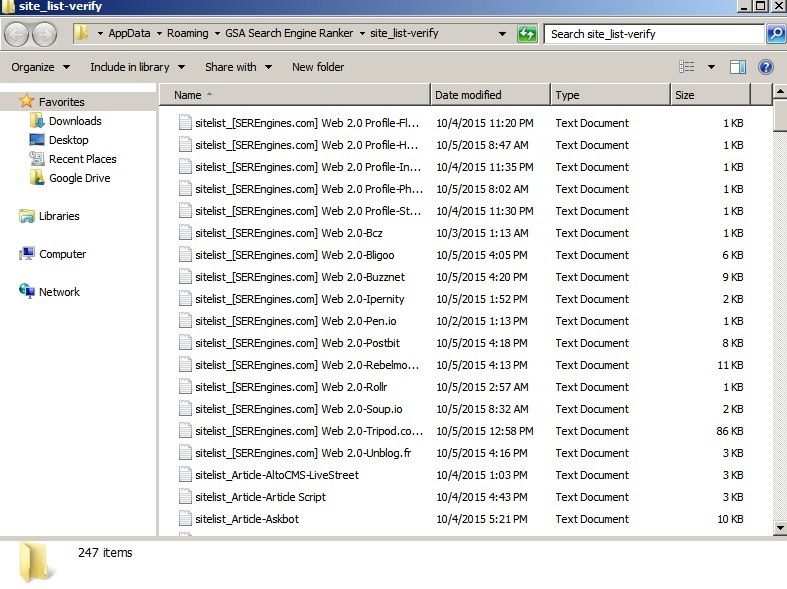

Each of these site lists is stored in its own folder, and each of those folders has the following structure:

So you have “sitelist”, which is just a prefix, then the engine group, e.g. “[SEREngines.com] Web 2.0”, “Article”, etc., and finally the individual engine/platform name, e.g. “AltoCMS-LiveStreet”, “Askbot”, “Buzznet”, etc. So when GSA SER manages to verify a backlink on, say, a website that matches its “Askbot” engine, that verified URL gets added to the “sitelist_Article-Askbot” file. And that’s basically how GSA SER site lists work.
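To make the naming scheme concrete, here is a minimal Python sketch that splits a site list file name into its engine group and engine name. It assumes that the group name never contains a hyphen (engine names such as “AltoCMS-LiveStreet” clearly can) and that a file may carry a “.txt” extension. Both are assumptions made for illustration, and `parse_sitelist_name` is a hypothetical helper, not something GSA SER ships with.

```python
def parse_sitelist_name(filename: str) -> tuple[str, str]:
    """Split 'sitelist_<group>-<engine>' into (group, engine).

    Assumption: the engine group contains no hyphen, so the FIRST hyphen
    separates group from engine. Requires Python 3.9+ (removeprefix).
    """
    name = filename.removesuffix(".txt")  # the extension is an assumption
    if not name.startswith("sitelist_"):
        raise ValueError(f"not a site list file name: {filename!r}")
    group, sep, engine = name.removeprefix("sitelist_").partition("-")
    if not sep or not engine:
        raise ValueError(f"missing engine name in: {filename!r}")
    return group, engine


print(parse_sitelist_name("sitelist_Article-Askbot"))
```

Splitting on the first hyphen only is what keeps a hyphenated engine name in one piece.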

Why do we need to store these verified backlinks? Quite simply because later they can be used by other GSA Search Engine Ranker projects for a much higher success rate, since we already know that, at some point, a GSA SER project managed to create a backlink on that target URL.

So a great set of verified link lists means high VpM, a good submitted-to-verified links ratio, and more effective link building campaigns overall.

Creating the Seed Target URLs List

Now, when you first start up GSA Search Engine Ranker, the verified site lists folder will be completely empty. That leaves you with two options:

- Buy GSA SER site lists – if you don’t have the time, you can simply purchase fresh lists from one of the many link lists providers. If you don’t know which ones are good, check out this case study or take a look at our GSA SER verified site lists of the Gods.

- Create your own site lists – if you do have the time, this is the better option, because you know that the lists you use will be unique and different from the link lists of other GSA Search Engine Ranker users.

For people who don’t have the time to scrape initially for target URLs, I have prepared 719,245 identified target URLs which you can simply download and import into your own GSA SER. Then simply run your verified links builder projects (configure them to use the “Identified” site lists) to verify as many of these identified target URLs as possible, which will be a lot since I have gathered them using a strategy that gives them a high chance to succeed in GSA Search Engine Ranker. If you choose that option, you can simply skip to the “Growing Your Verified Site Lists” section unless you are still interested in learning the entire process. Here’s the list:

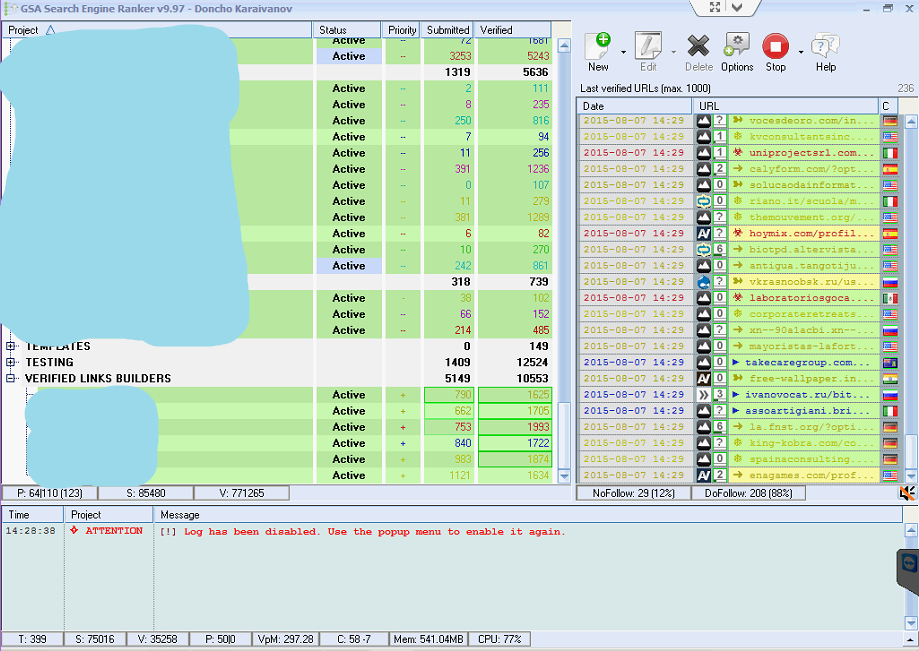

Just as a quick preview, let me show you a recent record VpM I achieved on one of our servers:

That’s just a little less than 5 verified backlinks per second. Of course, I managed to keep this up for just a few minutes before it stabilized at around 150, but it was still awesome. So, the techniques I am about to teach you really do work and you will be amazed at the results you get from your lists. Now let’s gather some seed target URLs.

You will need either Scrapebox or GScraper – take your pick. I will use Scrapebox for this tutorial just because I’m more used to it. Now start it up and then divert your attention to GSA SER.

Getting Footprints For The Scraping Process

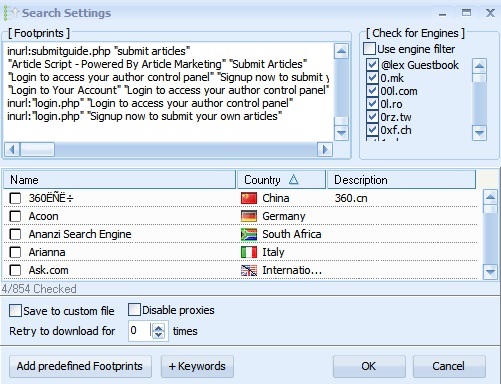

Click “Options” -> “Advanced” -> “Tools” -> “Search Online for URLs”. Then use the “Add predefined Footprints” button and add all the footprints for all the engines you plan to use – they will start appearing in the “Footprints” field. For those unaware, a footprint is basically some text that is found on websites using the same platforms.

For example, forums created using the vBulletin platform will have (if the owner of the forum didn’t remove it manually) a “Powered By vBulletin” text in the footer. So, if you search for “Powered By vBulletin” on search engines, the SERPs will return sites containing this text, which have a high chance of matching GSA SER’s vBulletin engine. Once you are done adding all the footprints you want, select all and copy-paste them into a file named “GSA SER footprints”:

Now when it comes to footprints, there are also other sources where you can find even more footprints, because the ones provided in GSA SER are most probably used by all other users of the tool and you want to be exclusive.

So, you can either get some custom footprints from this thread and/or you can use Footprint Factory. These will both provide you with even more footprints which most people probably don’t use when scraping for target URLs.

Scraping For Target URLs

For this tutorial, I will just use the footprints from GSA SER. Now, you can merge them with some keywords if you need more niche targeted URLs to be scraped, but I will import just the footprints into Scrapebox and let it run like that – without any keywords.
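If you do decide to merge, the idea is simply to pair every footprint with every keyword. Here is a rough Python sketch of that pairing. The function name and the sample keywords are made up for illustration, and Scrapebox can do the merge for you, so treat this as an explanation rather than a required step.

```python
from itertools import product


def merge_footprints(footprints, keywords):
    """Pair every footprint with every keyword to form search queries.

    With no keywords, the footprints are returned unchanged, which matches
    scraping with footprints only.
    """
    fps = [f.strip() for f in footprints if f.strip()]
    kws = [k.strip() for k in keywords if k.strip()]
    if not kws:
        return fps
    return [f"{fp} {kw}" for fp, kw in product(fps, kws)]


queries = merge_footprints(['"Powered By vBulletin"'], ["fitness", "gardening"])
for query in queries:
    print(query)
```

Keep in mind that the number of queries is footprints times keywords, so even a modest keyword list makes the scrape much longer.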

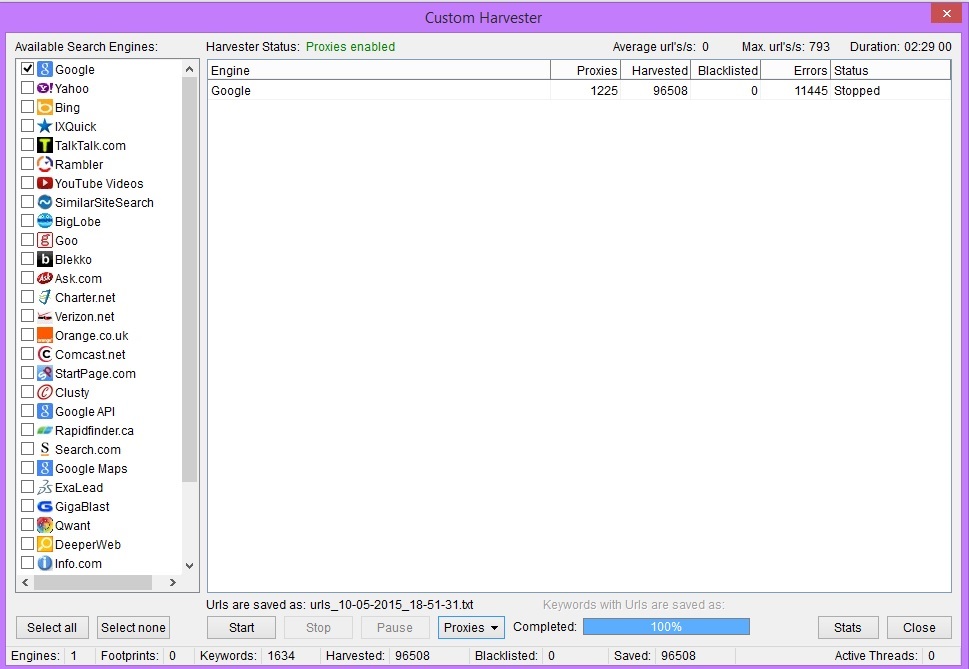

For the scraping process, you can avoid buying proxies since the new version of Scrapebox comes with user server proxies, which actually work great. From the “Custom Harvester”, you simply click “Proxies” and then tick the “User server proxies” option and you are good to go.

With the server proxies of Scrapebox, I start at around 600 URLs/s at 50 connections, which is awesome, but within a few minutes the speed drops a lot and stabilizes at 10 – 20 URLs/s. Still, this is pretty good for free proxies. If you want to save some time, you are going to have to either buy private proxies (I prefer BuyProxies) or use GSA Proxy Scraper (our tutorial and honest review).

While Scrapebox is doing its thing, we will prepare a few GSA SER projects which will act as verified links builders – they will basically try and post backlinks on the target URLs from Scrapebox.

Setting Up The GSA SER Projects That Will Build The Verified Site Lists

Simply click the “New” button from GSA SER and start creating the project with the following configuration:

- Content – first of all you will need to fill the content of the project. I use a combination of Kontent Machine (our tutorial and honest review) and a content spinner – can be WordAI, Spin Rewriter, The Best Spinner, etc (check out this case study of the top 5 content spinners if you don’t know which one to choose).

- Proxies – you will need dedicated proxies if you ever wish to reach high VpM with GSA SER. I use the ones provided by BuyProxies (our tutorial and honest review), but that’s up to you to choose the ones you like.

- Anchors distribution – since this will be a project with the sole purpose of building verified links, you can do whatever you like in terms of anchors. Test out some distributions on some site you don’t care about for example – it’s what I do.

- Engines – I select the following engine groups for my verified links builders projects:

    - Article

    - Blog Comment

    - Directory

    - Document Sharing

    - Forum

    - Guestbook

    - Image Comment

    - Indexer

    - Microblog

    - Social Bookmark

    - Social Network

    - Trackback

    - URL Shortener

    - Wiki

- Article – options include:

    - Just a link at a random location.

    - 0 – 1 authority backlinks.

    - 0 – 1 images.

    - 0 – 1 videos.

    - The option “Do not submit same article more than x times” is disabled.

- Options – this is where most of the magic happens:

    - If a form can’t be filled is set to “Choose Random”.

    - “Continuously try to post to a site even if failed before” is enabled, which basically allows the project to not produce “already parsed” messages.

    - No search engines are used.

    - No site lists are used – we will import the target URLs directly into the projects.

    - “Use URLs linking on same verified URLs” is enabled.

    - Scheduled posting is allowed – 5 accounts with 5 posts per account. Why allowed? Because sometimes if I let it just build unique domains, GSA SER might fail a couple of times before managing to create a backlink on a certain site. The scheduled posting allows it to try more than once to get the job done. Later, I simply de-dupe the verified site lists and we are left with only unique targets.

- Filters – I only select all types of backlinks to create and do not activate any other filters. We will talk about filtering your site lists a little later.

- Emails – 5 fresh ones per project. I use Yahoo mostly because they seem to work great with this software.

That’s basically the setup of a verified links builder project. Now, duplicate it 4 times to make a total of 5 projects and then import 25 emails (5 per project as we mentioned). You can go the extra mile and add different content for each of the duplicated projects, but that’s not mandatory.

Creating the Seed Verified Site Lists

At this point, Scrapebox should either be done or close to finishing depending on the number of keywords you imported. I got close to 100k target URLs which is enough to get me a good amount of seed verified site lists:

Now select all the 5 GSA SER verified links builders, right-click -> “Import Target URLs” -> “From File”, and select the file from Scrapebox’s “Harvester_Sessions” folder that represents the above scrape. When asked, randomize the lines and split the target URLs equally between the selected projects.

Notice that I haven’t de-duped the target URLs from Scrapebox – neither duplicate URLs nor duplicate domains have been removed. And that’s how we want it, because one project might fail to post to a target URL, but another one might succeed. We de-dupe only the verified site lists.
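For the curious, “randomize and split equally” boils down to a shuffle followed by a round-robin split, with duplicates deliberately left in. GSA SER does this for you in the import dialog. The Python sketch below only illustrates the idea, and `shuffle_and_split` is a hypothetical name.

```python
import random


def shuffle_and_split(urls, parts, seed=None):
    """Shuffle the URLs and deal them out into `parts` near-equal chunks.

    Duplicates are kept on purpose: a duplicate may land in a different
    project, which gets a second chance at the same target.
    """
    rng = random.Random(seed)
    shuffled = list(urls)
    rng.shuffle(shuffled)
    # Slicing with a step deals the URLs out like cards, one per project.
    return [shuffled[i::parts] for i in range(parts)]


chunks = shuffle_and_split(["a", "b", "c", "a", "d", "e", "f"], parts=5, seed=1)
print([len(chunk) for chunk in chunks])
```

Chunk sizes differ by at most one URL, so no project sits idle while another still has a long queue.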

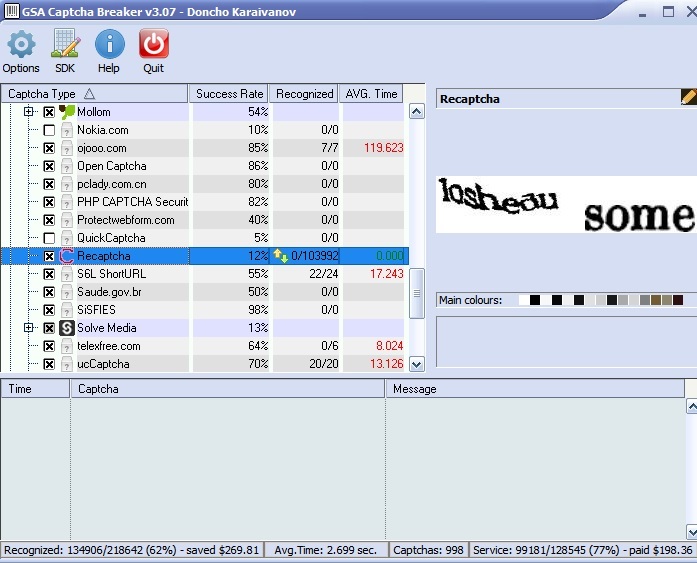

Now, at this point, the seed verified site lists are ready to be built. Your “Verified” site lists folder is still empty if you just installed GSA SER, but not for long. Before you start the 5 projects, make sure you have set up a captcha solving software. GSA Captcha Breaker (our tutorial and honest review) is a must and I strongly recommend a second captcha solving service to take care of hard-to-solve captchas and here’s why:

So, we have 218,642 captchas handled by GSA CB, out of which 134,906 were recognized. As you can see, I have set reCAPTCHA to go straight to the secondary captcha solving service (ReverseProxies OCR in our case) since GSA CB has a very low success rate with it (just 12%), and a total of 103,992 reCAPTCHAs were sent to it.

So, we have a total of 218,642 (captchas handled by GSA CB) + 128,545 (captchas handled by ReverseProxies OCR) = 347,187 total captchas handled. Out of those, 103,992 are reCAPTCHA as we see, which is close to 30% of all captchas handled. Without a secondary captcha solving service, you would not get the links from all these sites – and those are the higher-quality ones.
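The arithmetic behind those percentages, written out as a few lines of Python using only the figures quoted above (the variable names are mine):

```python
gsa_cb_handled = 218_642      # captchas handled by GSA CB
gsa_cb_recognized = 134_906   # of those, recognized by GSA CB
secondary_handled = 128_545   # captchas handled by ReverseProxies OCR
recaptcha_count = 103_992     # reCAPTCHAs among all captchas handled

total_handled = gsa_cb_handled + secondary_handled
recaptcha_share = recaptcha_count / total_handled
gsa_cb_recognition_rate = gsa_cb_recognized / gsa_cb_handled

print(f"Total captchas handled: {total_handled:,}")
print(f"reCAPTCHA share of all captchas: {recaptcha_share:.1%}")
print(f"GSA CB recognition rate: {gsa_cb_recognition_rate:.1%}")
```

The reCAPTCHA share comes out at just under 30%, which is the slice of targets you would lose without the secondary service.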

Now that we got that out of the way, it is time to build the seed verified site lists. Set the threads to 7 – 10 per proxy or less if you are not using private proxies, and start the projects.

Growing Your Verified Site Lists

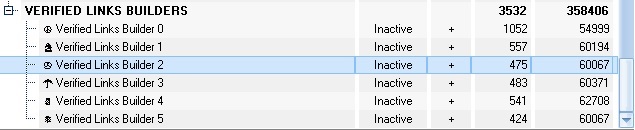

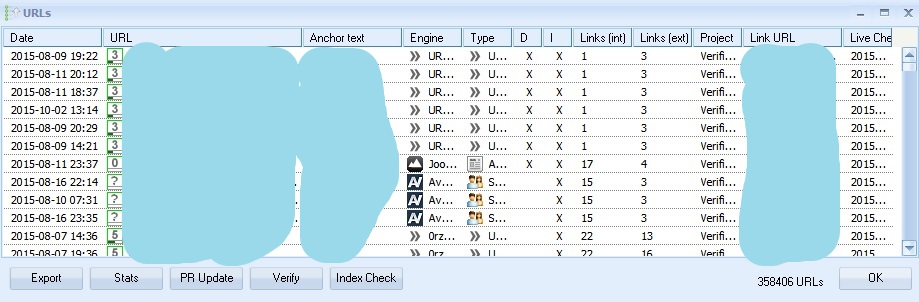

When the 5 GSA SER projects go through all the target URLs you imported into them, which you can check by looking at their remaining target URLs, you will be looking at something like this:

Now, these 358,406 verified URLs (these have been built over the past week, not just from this example) in my case are the seed list. And on this seed list, we will apply two strategies which will grow it exponentially.

Strategy Number 1

The concept of strategy number 1 is the following: Our verified links builders have surely created a lot of blog and guestbook comments on a lot of different unique URLs. Now on each of these URLs, there are (most probably) other comments by other people as well. But some/most of them are placed by other GSA SER users.

And their comments will link to their upper tiers. So, for example, if we use blog comments only in Tier 3, and so do another 100 GSA SER users, we can find their Tier 3 backlinks, which are on the same page as our Tier 3 backlinks, extract the external URLs and now we have their Tier 2 backlinks.

And those Tier 2 backlinks, when imported as target URLs into our verified links builders have a very high chance to be matched by a GSA SER engine, which will submit and then ultimately, verify a backlink on those sites.

In a sense, we are finding where other people using this software have posted backlinks and then we post backlinks there as well to get in on all the fun. So, how do you find these target URLs?

The first thing you do is select all the verified links builders and then right-click -> “Show URLs” -> “Verified”:

Here are all the 348,406 seed verified URLs. Now, we don’t need all of them as we said, but only the ones that possibly have a huge amount of external links on them – blog comments, guestbook comments, and you can also include image comments. So, right-click anywhere in the table -> “Select” -> “Engine Type”.

Then uncheck all engine groups which you don’t need, leaving only the aforementioned. This will mark from the table of verified backlinks, only the ones that match the engine groups you selected. Now click “Export” -> “Selected (Format: URL only)” and name the file “Seed Verified URLs Strategy 1”.

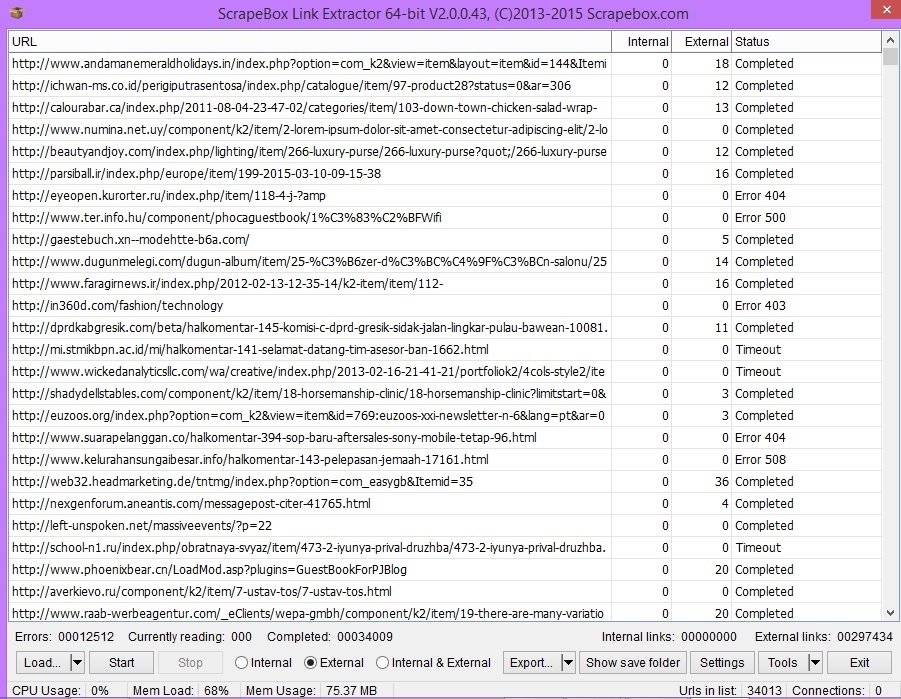

Now switch back to Scrapebox and open the “Link Extractor” addon. Load the “Seed Verified URLs Strategy 1” file and make sure only the “External” radio button is selected. Play with the number of connections – I set it to 50 and click “Start”.

This will basically find and save all external backlinks on each of the URLs we imported. Remember, those external URLs are (most probably) other GSA SER users’ upper tier backlinks.
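If you want to see what “external links only” means in practice, here is a small Python sketch that pulls external links out of a page’s HTML using only the standard library. It is not how the Scrapebox addon is implemented, just an illustration. The function name is hypothetical, and “external” is simplified to “different host than the page”, so www and non-www variants count as different hosts.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlsplit


class _AnchorCollector(HTMLParser):
    """Collects the href attribute of every <a> tag."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.hrefs.append(value)


def external_links(page_url, html):
    """Return absolute http(s) links that point to a different host."""
    page_host = urlsplit(page_url).hostname
    collector = _AnchorCollector()
    collector.feed(html)
    found = []
    for href in collector.hrefs:
        absolute = urljoin(page_url, href)  # resolve relative links
        parts = urlsplit(absolute)
        if parts.scheme in ("http", "https") and parts.hostname and parts.hostname != page_host:
            found.append(absolute)
    return found


sample_html = (
    '<a href="/about">About</a>'
    '<a href="http://other.example/post">Comment link</a>'
    '<a href="mailto:someone@blog.example">Mail</a>'
)
print(external_links("http://blog.example/page-1", sample_html))
```

Fetching the pages themselves is left out on purpose; with hundreds of thousands of URLs you want a tool with proxy and connection handling doing that part.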

Once the Link Extractor finishes, switch back to GSA SER and do the following: Select all verified links builders and then right-click -> “Modify Project” -> “Delete Target URL Cache”. While all 5 projects are still selected, right-click again -> “Modify Project” -> “Delete Target URL History” – when prompted if you want to remove “already parsed” messages click “Yes”, but when prompted whether you want to delete all account data, click “No”.

These two actions will basically clear the projects of any remaining target URLs and remove their history making them ready for new target URLs to import and post backlinks on:

This is what the finished Link Extractor looks like and, as you can see, we now have a new set of 297,434 target URLs. Scrapebox saves them automatically in the “Addon_Sessions/LinkExtractorData” folder so that is where you can find them.

Now, again just like before, select all 5 verified links builders, right-click -> “Import Target URLs” -> “From File” and then select the file from the “LinkExtractorData” folder. Randomize the list and split the target URLs equally between the GSA SER projects.

Then simply start the verified links builders again and watch the VpM jump and the verified links get built. Now while GSA SER site lists building strategy number 1 is at play, we can begin preparing the stage for strategy number 2.

Strategy Number 2

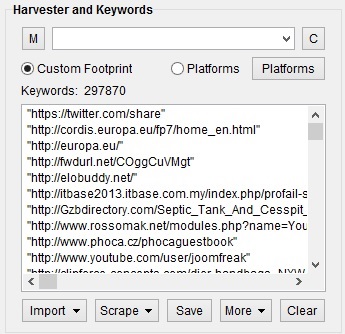

The second strategy is even simpler. What we are going to do is simply take each of these 297,434 URLs the Link Extractor of Scrapebox found, surround them with quotes and then scrape on Google using them as keywords. Why?

Because that way, we are going to find the lower tier backlinks of other GSA Search Engine Ranker users. If you understood growing site lists strategy number 1, you know that we have currently set up our verified links builders to create backlinks on other GSA SER users’ verified URLs. Well, as you might have guessed, there is (most probably) more than one lower tier backlink pointing to every upper tier backlink and we want to find those as well.

Scraping on search engines using these 297,434 target URLs surrounded in quotes will return, in the SERPs, all backlinks that point to the URL we performed a search for. And there’s a good chance these could also be added to our GSA SER verified site lists.

So, simply import the file with the 297,434 URLs that the Link Extractor created into Scrapebox and merge with a file containing the following text: “%kw%” (with the quotes), which will basically surround all URLs with quotes:
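The merge itself is a plain text substitution: every URL replaces the `%kw%` placeholder in the template. A minimal Python equivalent, in case you would rather prepare the keyword file outside Scrapebox (the function name is hypothetical):

```python
def quote_as_keywords(urls, template='"%kw%"'):
    """Substitute each URL into the template, skipping blank lines."""
    return [template.replace("%kw%", url.strip()) for url in urls if url.strip()]


keywords = quote_as_keywords(["http://site.example/some-article", ""])
print(keywords)
```

The quotes matter because they ask the search engine for an exact match on the URL instead of its individual words.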

Then simply start the scraping process. If you let it go till the end, you will end up with multiple millions of target URLs all of which have a good chance to get verified in GSA SER. When Scrapebox finishes or you stop it manually, you simply delete target URL cache and target URL history of the verified links builders again and you import the harvested URLs.

You don’t even need to go back to creating a seed list again anymore unless you want to use some specific keywords in combination with the footprints. You can simply keep on doing strategy 1 and then strategy 2 until your verified site lists go into the millions. So what do you do when they become extremely big?

Filtering GSA SER Verified Site Lists

Once you get a little bit more into GSA Search Engine Ranker, you are going to want to start creating separate site lists for different projects – just like SeRocket's EDU and GOV only lists, GSA AAM's reCAPTCHA and Text Captcha lists, and GSA Verified Targets' low OBL and high DA and PA lists. So, I am going to list parameters by which it is good and reliable to filter lists and how you can do it.

Of course, whenever you create new site lists, you will need to archive the ones already existing in the “Verified” folder of GSA SER and make it completely empty. Just don’t forget that or you will mix the lists. Now, you have two options when creating new site lists:

- You can build them from scratch – scrape brand new target URLs using unique keywords + footprints and then employ strategy 1 and 2 on the seed verified site lists.

- Filtering the already built verified site lists – you can save some time by simply separating a sub-set of your already built link lists.

Filtering By PR And OBL

This one is obvious. Although PR is not 100% reliable nowadays, it is still an indicator. However, it is a double-edged sword, because if you filter by PR, you might be missing out on some great backlinks from websites with unknown PR which are actually quality, but haven’t had their PageRank publicly updated yet.

The best way to create site lists with high PR is to simply activate the PR filter on your GSA SER verified links builders – for example, a PR3+ is a good start. Also, don’t forget to enable the “Skip Unknown PR” option. You can also add an OBL limit to make the projects skip heavily spammed blog comments and similar engine groups to further increase the quality of the site lists.

Filtering By Moz Metrics

We already showed how you can pre-filter GSA SER site lists by Moz PA and DA in this tutorial, but basically, you have two options. You can get an API key from Moz and then use Scrapebox’s Page Authority addon. Prepare to wait a lot if you are using the free API provided by Moz, because it has a 10 second wait time between each request.
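To get a feel for “wait a lot”, here is a back-of-the-envelope estimate in Python. It assumes one URL per request and counts only the 10 second pause, ignoring network time; the one-URL-per-request part is my assumption and may not match how the API or the addon batches lookups.

```python
from datetime import timedelta


def estimated_wait(url_count, seconds_per_request=10):
    """Total pause time if every URL costs one rate-limited request."""
    return timedelta(seconds=url_count * seconds_per_request)


# Roughly the size of the seed scrape from earlier in this tutorial.
print(estimated_wait(100_000))
```

Under those assumptions, 100,000 URLs mean about eleven and a half days of waiting, which is why the alternative below is so welcome.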

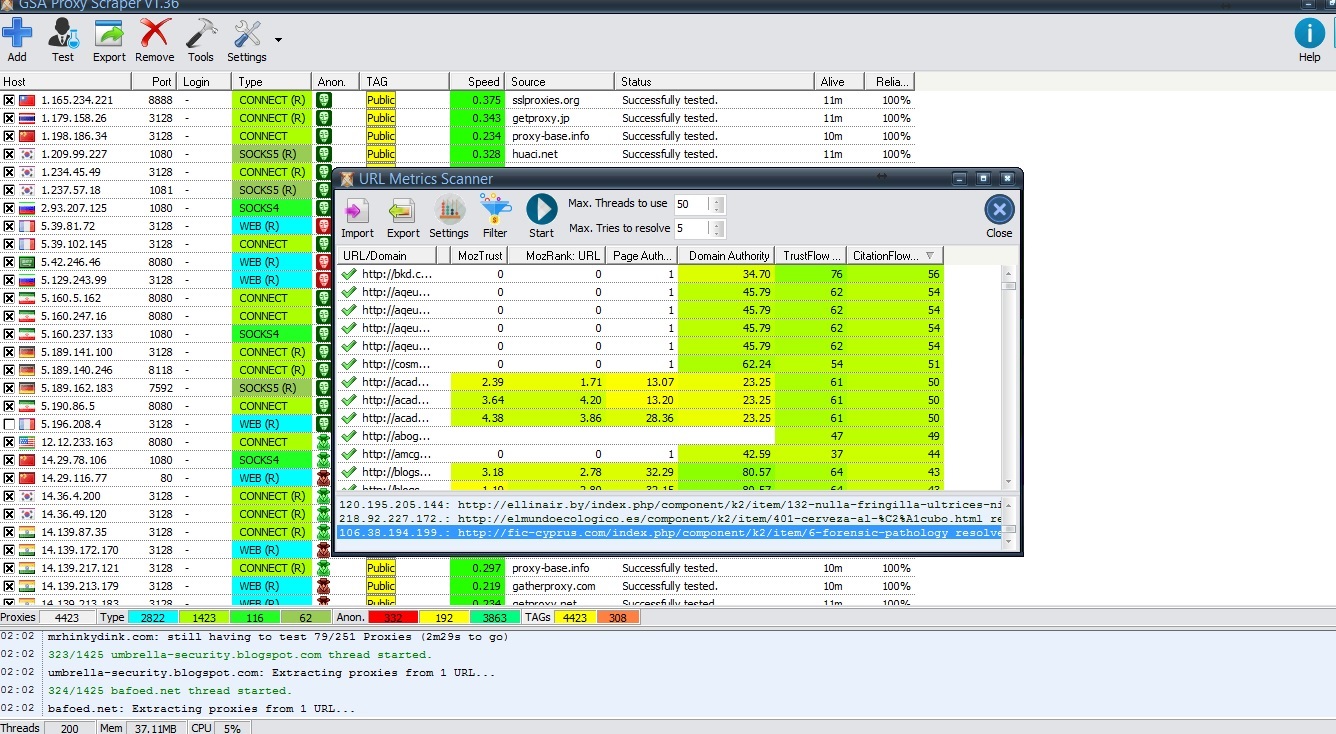

However, with the new URL metrics scanner of GSA Proxy Scraper, you can now check Moz stats without the need of a Moz account and I will show how in a second.

Filtering By Majestic Metrics

This is where it gets tricky. Since Majestic doesn’t have a free API that allows unlimited requests like Moz, you cannot check CF and TF of your target URLs with their free account. It allows you to check like 10 websites per day and that’s it.

However, since September 25, you can use GSA Proxy Scraper which now has a free URL metrics scanner tool. And the best part? It doesn’t require any accounts to be created on any of the third party tools it uses and will return CF and TF of the URLs you want it to scan without requiring you to spend some bucks on a Majestic subscription. All it needs is a bunch of proxies which it, well, provides:

As you can see, you can get both metrics from Moz and Majestic for free – no signups no nothing. The process takes quite a while of course, depending on the number of URLs you want to scan, but hats off to the GSA guys for providing this functionality.

When the URL metrics scanner tool finishes, you can simply use the “Filter” button to export only the URLs you want, e.g. with TF higher than 20 and CF higher than 20, etc. Then you import those target URLs into your verified links builders and voila – you will build backlinks only on websites with TF and CF above 20.
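The same filter is easy to express in code if you ever export the metrics and want to slice them yourself. The Python sketch below assumes each row is a dictionary with `url`, `tf` and `cf` keys, which is a made-up structure and not the scanner’s actual export format; the sample values are invented too.

```python
def filter_by_metrics(rows, min_tf=20, min_cf=20):
    """Return URLs whose TF and CF are both strictly above the thresholds."""
    return [
        row["url"]
        for row in rows
        if row["tf"] > min_tf and row["cf"] > min_cf
    ]


sample_rows = [
    {"url": "http://strong.example/", "tf": 31, "cf": 28},
    {"url": "http://lopsided.example/", "tf": 8, "cf": 45},
    {"url": "http://borderline.example/", "tf": 20, "cf": 20},
]
print(filter_by_metrics(sample_rows))
```

Requiring both metrics to pass filters out sites with a high CF but a low TF, where there are lots of links but little trust behind them.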

Filtering By Engine Types

Another great filter you can use is GSA SER engines. You can simply separate your contextual from non-contextual verified site lists. This will increase VpM of your projects if you are usually separating them into contextuals and non-contextuals. You can easily build such link lists by selecting the engines allowed in the verified links builders.

Keeping Your GSA SER Verified Site Lists Clean

Just building your link lists won’t cut it. You need to keep them clean as a whistle. Why? Because otherwise they will get so cluttered with duplicate targets and old, no longer working domains that your VpM will drop to single digits.

This part is actually pretty simple. You basically need to do two things:

- Daily – remove duplicate domains and duplicate URLs from the site lists.

- Monthly – use the “Clean-Up (Check and Remove none working)” functionality which will remove all of the target URLs from your site lists that no longer match any GSA SER engine. Websites start failing as time goes by for a number of reasons – manual changes, deletion of site, etc. Those need to be cleaned regularly in order for the lists to perform at their maximum potential.
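GSA SER has built-in tools for the daily de-duping, so you don’t need a script for it. Still, the difference between the two kinds of duplicates is easier to see in code. In this Python sketch (function names are hypothetical), “duplicate domain” is simplified to “same host name”, so subdomains are treated as separate sites.

```python
from urllib.parse import urlsplit


def dedupe_urls(urls):
    """Drop exact duplicate URLs, keeping the first occurrence and the order."""
    return list(dict.fromkeys(u.strip() for u in urls if u.strip()))


def dedupe_domains(urls):
    """Keep only the first URL seen for each host name.

    Lines without a parseable host (for example, a missing http:// prefix)
    are dropped.
    """
    seen_hosts = set()
    kept = []
    for url in urls:
        url = url.strip()
        host = urlsplit(url).hostname
        if host is None or host in seen_hosts:
            continue
        seen_hosts.add(host)
        kept.append(url)
    return kept


verified = [
    "http://blog.example/post-1",
    "http://blog.example/post-1",
    "http://blog.example/post-2",
    "http://forum.example/thread-9",
]
print(dedupe_urls(verified))
print(dedupe_domains(verified))
```

Removing duplicate URLs keeps several pages per site, while removing duplicate domains leaves one target per site, so choose based on whether the list holds page-level targets such as blog comments.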

The cleaning up process can take up to a full day or even more depending on the size of your lists but it’s well worth it. Another option is to basically delete your site lists if you consider them to be too old and start at the beginning – create new seed verified site lists and then apply strategy 1 and 2 on them. This will give you brand new fresh GSA SER link lists which will skyrocket the effectiveness of your projects.

Wrapping It Up

As you saw, creating your own site lists is no child’s play. It requires a lot of time and effort and a significant amount of resources – proxies, captcha solving software, etc. While it is the preferred way when it comes to attaining link lists, if you don’t have the time, you can always take advantage of the services of a good GSA SER site lists provider.

Hopefully this tutorial covered everything you wanted and needed to know about building and managing your own GSA SER site lists. Now, all you have to do is never stop utilizing the strategies and techniques I shared with you here and you will feel the pleasure brought to you by effective GSA Search Engine Ranker link building.
